Objectives

Project Aim

This project aims to enhance the game experience of the 'Yu-Gi-Oh! TCG' through real-time coexistence and interaction in a mixed reality system.

Our team is motivated by the fact that the game is not friendly to newcomers. The Yu-Gi-Oh! trading card game was launched in 1999, and players now have over a thousand cards to choose from. New players cannot know every card effect during a game, so they have to look effects up through other sources such as the internet, which means they cannot enjoy the card game quickly. Our mixed reality system is intended to help new players enjoy the card game right away. We decided to create a database of card information and connect it to the UI to support players during card battles. The UI will also show important information such as life points and the current game phase, so that players can easily understand the basics and enjoy the game.

Several AR devices available today can present virtual objects and information in real time. With these devices, scenes from the Yu-Gi-Oh! anime series can be reproduced, letting monsters appear in the real world. Users can control monsters in real time through gestures, for example by spawning them or ordering an attack. Monster fights are presented with striking visual and sound effects, giving players more immersive and exciting gameplay.

Project Objectives

To achieve this aim, the project defines the following sub-objectives:

- To create a real-time database

The database will store the values of monster cards and players’ life points. Whenever a value changes during a battle (e.g. life points decrease because of an enemy’s attack), the data is updated immediately. When a player calls a monster, the system looks up that monster's ID and uses it to retrieve all of the monster's values.
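The lookup-by-ID and immediate-update behaviour described for the database can be sketched as follows. This is only a minimal in-memory illustration, not the project's actual database: the names `MonsterCard`, `BattleStore`, `apply_damage` and `subscribe`, the card ID `1`, and the starting value of 8000 life points are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class MonsterCard:
    """Values stored for one monster card (hypothetical field names)."""
    card_id: int
    name: str
    atk: int
    defense: int


class BattleStore:
    """In-memory stand-in for the real-time database (assumed design)."""

    def __init__(self, starting_life_points: int = 8000) -> None:
        # 8000 is an assumed default; the source does not state a value.
        self._starting_life_points = starting_life_points
        self._monsters: Dict[int, MonsterCard] = {}
        self._life_points: Dict[str, int] = {}
        self._listeners: List[Callable[[str, int], None]] = []

    def add_monster(self, card: MonsterCard) -> None:
        self._monsters[card.card_id] = card

    def get_monster(self, card_id: int) -> MonsterCard:
        # Look up every stored value of a monster by its ID.
        return self._monsters[card_id]

    def add_player(self, player: str) -> None:
        self._life_points[player] = self._starting_life_points

    def subscribe(self, listener: Callable[[str, int], None]) -> None:
        # Listeners (e.g. a UI label) are called on every life point change.
        self._listeners.append(listener)

    def apply_damage(self, player: str, amount: int) -> int:
        # Update the stored value first, then notify listeners immediately.
        new_value = max(0, self._life_points[player] - amount)
        self._life_points[player] = new_value
        for listener in self._listeners:
            listener(player, new_value)
        return new_value


store = BattleStore()
store.add_monster(MonsterCard(card_id=1, name="Dark Magician", atk=2500, defense=2100))
store.add_player("player_1")

seen = []  # records what a subscribed UI element would receive
store.subscribe(lambda player, life_points: seen.append((player, life_points)))

attacker = store.get_monster(1)
remaining = store.apply_damage("player_1", attacker.atk)
```

In this sketch, the subscriber list plays the role of the real-time link between the database and the UI: any interface that registers a listener is told about a life point change as soon as it is written.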

- To design monster models and special effects

When a player calls a monster card, a 3D model of that monster will appear above the card. Each monster also has several animations, such as appearing, attacking and dying. Particle effects accompany the animations to give better visual feedback, and a few sound effects and background music will be added. These animations and special effects will play automatically at the appropriate moments.
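One possible way to make animations, particles and sounds play automatically at the right moment is a small cue table keyed by game event. The sketch below is an assumption for illustration only: the event names, asset names, delays and the `schedule` function are hypothetical and are not taken from the project.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Cue:
    """One effect to play, delayed relative to the triggering event."""
    delay_ms: int
    kind: str   # "animation", "particles" or "sound"
    asset: str  # hypothetical asset name


# Hypothetical cue table: which effects follow each game event, and when.
CUES: Dict[str, List[Cue]] = {
    "summon": [
        Cue(0, "animation", "appear"),
        Cue(0, "sound", "summon"),
        Cue(300, "particles", "summon_glow"),
    ],
    "attack": [
        Cue(0, "animation", "attack"),
        Cue(400, "particles", "impact"),
        Cue(400, "sound", "hit"),
    ],
    "destroyed": [
        Cue(0, "animation", "death"),
        Cue(200, "sound", "shatter"),
    ],
}


def schedule(event: str, start_ms: int) -> List[Tuple[int, str, str]]:
    """Return (play_time_ms, kind, asset) for an event, earliest first."""
    cues = sorted(CUES.get(event, []), key=lambda cue: cue.delay_ms)
    return [(start_ms + cue.delay_ms, cue.kind, cue.asset) for cue in cues]


attack_plan = schedule("attack", start_ms=10_000)
```

Keeping the timings in a table rather than in the animation code means the delay between, say, an attack animation and its impact particles can be tuned without touching the playback logic.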

- To set up non-interactive visual interfaces

Non-interactive visual interfaces display useful battle information from the real-time database. For example, a monster's ATK/DEF values are displayed in front of its 3D model. These interfaces only let players see the state of the whole battle clearly; players cannot interact with them.

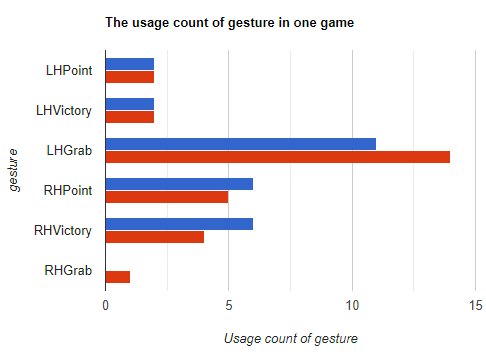

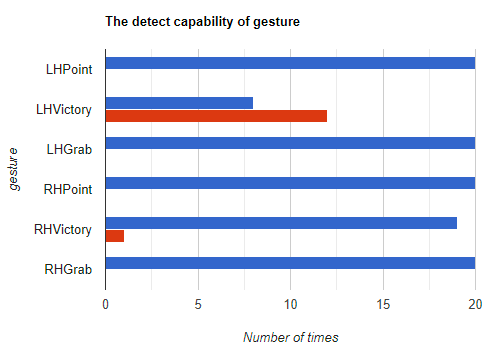

- To set up interactive visual interfaces

The system will include several interactive visual interfaces that players use to interact with the system. For example, a user can point to a rulebook UI; when the system detects this action, it opens the rulebook. Interactive visual interfaces therefore help players understand what they can do and what they are doing.
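The rulebook example can be sketched as a simple hit test: the system checks whether the pointed position falls inside the rulebook element and, if so, opens the rulebook. This is a simplified 2D illustration under stated assumptions; a mixed reality system would more likely cast a ray in 3D, and the names `RulebookButton`, `contains` and `on_point` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RulebookButton:
    """Rectangular UI element that opens the rulebook when pointed at."""
    x: float       # left edge
    y: float       # bottom edge
    width: float
    height: float
    rulebook_open: bool = False

    def contains(self, px: float, py: float) -> bool:
        # True when the pointed position lies inside the rectangle.
        return (self.x <= px <= self.x + self.width
                and self.y <= py <= self.y + self.height)

    def on_point(self, px: float, py: float) -> bool:
        # Open the rulebook only if the pointing gesture hits the button.
        if self.contains(px, py):
            self.rulebook_open = True
        return self.rulebook_open


button = RulebookButton(x=0.0, y=0.0, width=2.0, height=1.0)
missed = button.on_point(5.0, 5.0)   # pointing elsewhere does nothing
opened = button.on_point(1.0, 0.5)   # pointing at the button opens it
```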